Imagine you’re a surgeon halfway through a complex spinal procedure. You can’t stop. You can’t flip through a reference manual. And yet, right there in your field of vision, a precise 3D overlay of the patient’s MRI scan is guiding your every incision: no screen, no assistant holding up a monitor, just seamless information fused with reality. That’s not a scene from a sci-fi film. That’s happening right now in operating rooms using Microsoft HoloLens and surgical AR platforms like Medivis and Proprio.

Healthcare has always been an industry where the stakes of bad UX are brutally high. A confusing medication interface can lead to dosing errors. A cluttered clinical dashboard can cause a nurse to miss a critical alert. Now, as augmented reality begins to move from prototype labs into real clinical environments, the UX decisions being made today will shape whether this technology saves lives or creates terrifying new failure points. No pressure, right?

The global AR in healthcare market was valued at approximately $1.7 billion in 2022, and analysts at Grand View Research project it will grow at a compound annual growth rate of over 30% through 2030. That’s explosive growth, and it’s not just being driven by technology enthusiasts dreaming about the future. It’s being driven by real, grinding problems: surgical training bottlenecks, clinician burnout, patient education gaps, and a healthcare system straining under its own complexity.

So what does this growth mean for you—the UX designer, the product manager, the digital health professional trying to figure out where AR actually fits? It means the decisions you make about interaction models, information hierarchy, cognitive load, and safety design in AR environments are suddenly among the most consequential design decisions in the world. Let’s dig into where this is going, what’s working, what’s failing, and what the future actually looks like.

Surgical Precision and the New Operating Theater UX

When Information Becomes Part of the Procedure

The operating room is arguably the most extreme UX environment on earth. A surgeon’s hands are occupied, their eyes are locked on the patient, and every second counts. Traditional UX paradigms—tap a button, scroll a list, glance at a monitor—simply don’t work here. AR changes the fundamental interaction model by bringing information into the surgeon’s primary field of view without requiring them to look away from what matters most.

Medivis, a New York-based surgical AR company, has built a platform that allows surgeons to view patient-specific CT and MRI data as 3D holograms overlaid directly onto the operating field. The UX challenge is not just rendering the image correctly; it’s figuring out what to show, when to show it, and how to make it dismissible without breaking the surgical flow. Designing for these environments means thinking about gaze-based interaction, voice commands, and foot pedal controls in ways that most designers have never considered before. The input modalities are entirely different from anything in a conventional design toolkit.

Research published in the Journal of Medical Systems found that AR-assisted surgeries showed measurable improvements in accuracy for procedures like pedicle screw placement in spinal surgery—a procedure where a millimeter of error can mean paralysis. But accuracy isn’t just about the AR rendering being technically correct. It’s about the UX being transparent enough that the surgeon trusts the overlay, understands its limitations, and knows exactly when they’re looking at real tissue versus projected data. Designing for trust in life-or-death environments is a whole new frontier, and it demands a level of rigor and humility that the tech industry hasn’t always demonstrated.

Managing Cognitive Load Under Pressure

Here’s the paradox of surgical AR: the goal is to reduce cognitive load by surfacing relevant information at the right moment, but poorly designed AR can catastrophically increase cognitive load by flooding the field of view with noise. Think of it like the difference between a well-designed car dashboard and a cockpit with every warning light flashing simultaneously. Both show you information. Only one helps you drive.

The concept of “attentional narrowing,” well-documented in human factors research, tells us that under stress, people focus on fewer stimuli. A surgeon under pressure isn’t going to parse a complex holographic interface. They need information that’s immediately legible, contextually relevant, and visually distinct from the surgical environment itself. Color theory, depth cues, contrast ratios, and animation timing all take on life-or-death significance in this context. You’re not designing for someone scrolling comfortably on a couch. You’re designing for someone whose hands are inside another human being.

Companies like Proprio are tackling this by using AI to dynamically filter what the AR system displays based on the phase of the procedure. Instead of displaying all information continuously, the system learns which details are relevant during incision, closure, and navigation. This is adaptive UX at its most sophisticated—the interface itself is intelligent enough to know when to step back. For UX designers entering this space, this is a masterclass in the principle of progressive disclosure, scaled to a context where every design decision has direct physiological consequences for a patient on a table.
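
To make the idea concrete, here is a minimal Python sketch of phase-aware filtering. It is not how Proprio or any vendor implements it: the paragraph above describes a system that learns relevance, and this sketch swaps in a hand-written tag lookup purely for illustration. The phase names come from the text; `OverlayItem`, `visible_items`, the sample item names, and the `max_items` cap are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    INCISION = "incision"
    NAVIGATION = "navigation"
    CLOSURE = "closure"


@dataclass(frozen=True)
class OverlayItem:
    name: str
    phases: frozenset       # phases in which this item is considered relevant
    critical: bool = False  # critical items are never filtered out


def visible_items(items, phase, max_items=3):
    """Return the overlay items to show during the given phase.

    Critical items always appear. Other items appear only if they are
    tagged for the current phase, and only until the max_items cap is
    reached. The cap never hides a critical item.
    """
    critical = [i for i in items if i.critical]
    relevant = [i for i in items if not i.critical and phase in i.phases]
    remaining = max(0, max_items - len(critical))
    return critical + relevant[:remaining]


# Hypothetical overlay catalog, for illustration only.
ITEMS = [
    OverlayItem("vitals_alert", frozenset(), critical=True),
    OverlayItem("incision_plan", frozenset({Phase.INCISION})),
    OverlayItem("screw_trajectory", frozenset({Phase.NAVIGATION})),
    OverlayItem("nerve_map", frozenset({Phase.INCISION, Phase.NAVIGATION})),
    OverlayItem("suture_guide", frozenset({Phase.CLOSURE})),
]

for phase in Phase:
    print(phase.value, [i.name for i in visible_items(ITEMS, phase)])
```

The design choice worth noticing is the hard cap. Progressive disclosure in this setting is less about what the system can show and more about a budget for what the surgeon can absorb, with safety-critical items exempt from that budget.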

Medical Training and Education: Replacing the Cadaver Lab

Building Clinical Intuition Without Patients

Medical education has a dirty secret: learning to operate on humans has traditionally required practicing on humans. The apprenticeship model (see one, do one, teach one) has obvious ethical complexities. AR is beginning to change the calculus by offering medical students and residents a middle ground between textbook diagrams and live patients. And the UX of these training systems is going to determine whether the next generation of physicians is better or worse prepared than the one before.

Companies like ImmersiveTouch and 3D Systems have developed AR simulation platforms that allow trainees to practice procedures like epidural injections and orthopedic surgeries with haptic feedback and real-time performance metrics. The UX challenge here is creating a sense of genuine consequence within a safe environment: making the simulation feel real enough to build genuine clinical intuition while keeping the feedback loops clear enough to accelerate learning. That’s a razor-thin balance. Too gamified, and trainees develop unrealistic expectations. Too sterile, and the emotional engagement required for deep learning evaporates.

Anatomy learning is another area where AR is showing extraordinary early promise. The Human Anatomy Atlas app by Visible Body has over 10 million users and allows students to explore 3D models of every system in the human body. But the real UX breakthrough isn’t the 3D model; it’s the contextual layering. Students can strip away the muscular system to see the circulatory system beneath or rotate a structure to understand spatial relationships that a 2D textbook simply can’t convey. This kind of interactive spatial learning aligns with dual coding theory from cognitive psychology, which holds that information is better retained when it’s encoded both verbally and visually rather than through text alone.

Designing Feedback Systems That Actually Teach

The most overlooked UX element in medical training AR is feedback design. In a real procedure, feedback is immediate and brutally honest—the patient either bleeds or doesn’t. In a simulation, you have to design feedback that’s honest enough to be instructive but structured enough to prevent learner demoralization. This is genuinely difficult UX work that sits at the intersection of instructional design, behavioral psychology, and interface design.

Effective AR training systems need to deliver feedback at multiple levels simultaneously: immediate tactile and visual cues during the procedure, plus reflective post-session analytics that help trainees understand patterns in their performance over time. Think of it like having a flight simulator that doesn’t just crash when you make an error but shows you a replay of every micro-decision that led to the crash. Building that kind of multi-layered feedback architecture requires designers to think about time differently: not just the experience in the moment but the learning journey across dozens of sessions over months.
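
As a rough illustration of those two time scales, the Python sketch below separates an in-the-moment cue from a cross-session summary. Everything in it is an assumption made for the example: the function names, the step names, the idea of measuring error as deviation in millimeters, and the 2.0 mm tolerance are hypothetical and not drawn from any real training platform.

```python
from collections import defaultdict
from statistics import mean


def immediate_cue(deviation_mm, tolerance_mm=2.0):
    """In-procedure feedback: return "warn" when the trainee drifts past
    the tolerance, otherwise None (no cue, so the trainee is not interrupted)."""
    return "warn" if deviation_mm > tolerance_mm else None


def session_trends(sessions):
    """Post-session feedback: average the deviation for each step across
    sessions, so recurring weak spots stand out over time.

    sessions is a list of dicts mapping step name -> deviation in mm.
    """
    by_step = defaultdict(list)
    for session in sessions:
        for step, deviation in session.items():
            by_step[step].append(deviation)
    return {step: mean(values) for step, values in by_step.items()}


# Hypothetical data from two practice sessions.
history = [
    {"needle_entry": 3.0, "depth_control": 1.0},
    {"needle_entry": 2.0, "depth_control": 1.0},
]
print(immediate_cue(3.0))       # cue fires during the procedure
print(session_trends(history))  # pattern reviewed afterwards
```

Keeping the two functions separate mirrors the design point: the live cue has to be terse and binary, while the reflective view can afford detail because nobody is mid-procedure when they read it.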

The gamification question is worth lingering on. There’s a real tension in medical training UX between using game design principles to boost engagement and maintaining the gravitas appropriate to a field where the skills being learned will eventually affect real lives. The best solutions thread this needle by using progress mechanics and mastery frameworks without trivializing clinical scenarios. Leaderboards that show time-to-completion for a simulated surgery feel wrong. Competency benchmarks tied to clinical skill standards feel right. The design choices around how you frame achievement in medical training AR aren’t just UX decisions—they’re ethical ones.

Patient Experience and the AR-Powered Consultation

Turning “Trust Me, I’m a Doctor” Into “Look, Here’s What’s Happening”

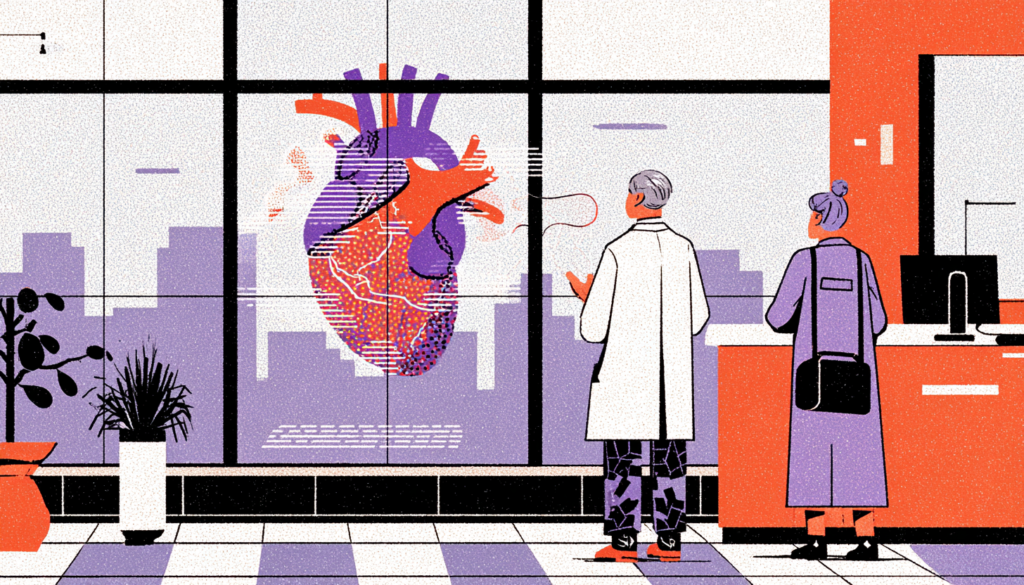

There’s a fundamental power imbalance in most clinical consultations. The physician has the knowledge. The patient has their body. AR has the potential to collapse that gap in ways that could transform patient engagement, informed consent, and ultimately clinical outcomes. When a patient can actually see their tumor, understand its relationship to surrounding structures, and watch an animation of how the proposed surgery will unfold—that’s not just a better experience. That’s genuinely better medicine.

Research consistently shows that patients who understand their diagnosis and treatment plan have better outcomes. A 2021 study in Patient Education and Counseling found that visual aids significantly improved comprehension of complex medical information compared to verbal explanation alone. Now imagine those visual aids are not static pamphlets but dynamic, personalized 3D reconstructions of the patient’s own imaging data, manipulable in real space during the consultation. That’s the direction AR is pointing, and companies like EchoPixel have already built early versions of this for radiology consultations.

The UX design challenge for patient-facing AR is entirely different from clinical AR. You’re no longer designing for trained professionals with deep domain knowledge—you’re designing for anxious people who may be receiving frightening news and who have wildly varying levels of health literacy. Every interaction needs to be intuitive enough for a 70-year-old with no tech experience while being substantive enough to genuinely educate. The information architecture has to balance completeness with clarity, and the emotional tone of the visual design carries enormous weight. Color choices, animation pacing, and the degree of anatomical realism all need to be tested with real patients across demographic groups, not just assumed.

Accessibility and the Equity Question Nobody Is Asking Loudly Enough

Here’s something that doesn’t get nearly enough attention in the excitement around AR in healthcare: who gets access to this technology, and what happens to the quality of care for those who don’t? AR hardware remains expensive. HoloLens 2 retails at around $3,500. Even more affordable AR solutions require devices and connectivity that not everyone has. If AR-enhanced consultations, training, and surgical guidance become standard in well-funded urban hospitals while rural and underfunded facilities continue operating without them, we risk creating a two-tier healthcare system stratified by technological access.

UX designers and product managers working in this space have a responsibility to push back against the assumption that premium technology automatically reaches everyone who needs it. Designing for accessibility in AR healthcare means considering cost architecture, offline functionality, and hardware-agnostic design approaches that allow core experiences to degrade gracefully on lower-end devices. It means user testing with elderly populations, non-English speakers, and people with visual or motor impairments: the groups who are often the last to be considered and the first to be harmed by poorly designed healthcare technology.

The equity question also applies to clinical training. If AR simulation labs exist primarily at elite medical schools, the pipeline of well-prepared clinicians entering underserved communities doesn’t improve. The UX community can advocate for design approaches that make these tools more accessible, not just more impressive for those who can afford them.

The Safety Design Challenge: When AR Goes Wrong

Designing for Failure Before It Happens

Every technology fails. Screens crash. Networks drop. Sensors drift. In most contexts, a software failure is an inconvenience. In an AR-guided surgical procedure or a medication delivery system overlaid with AR guidance, a failure can be catastrophic. The UX of failure states, error handling, and graceful degradation in healthcare AR isn’t a secondary concern; it belongs at the center of the design process from day one.

The field of human factors engineering has developed robust frameworks for designing fail-safe systems in aviation, nuclear power, and aerospace. Healthcare AR desperately needs to import these frameworks and adapt them to the specific challenges of the clinical environment. What happens when the AR overlay loses tracking mid-procedure? What visual cue tells the surgeon to disregard the hologram and rely on direct vision? How does the system communicate uncertainty in its data—because an AR overlay that presents imprecise information with perfect visual confidence is more dangerous than no overlay at all?
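
One way to reason about the tracking-loss question is as an explicit state decision made on every frame. The Python sketch below is a simplified illustration under stated assumptions, not a validated safety mechanism: the state names, the `next_state` function, and every threshold are hypothetical placeholders that a real system would have to derive from clinical and human factors testing.

```python
from enum import Enum


class OverlayState(Enum):
    TRUSTED = "trusted"        # render the overlay normally
    DEGRADED = "degraded"      # render with a visible "low confidence" treatment
    SUPPRESSED = "suppressed"  # hide the overlay and tell the surgeon why


def next_state(tracking_error_mm, ms_since_last_fix,
               warn_mm=1.0, fail_mm=2.0, stale_ms=200):
    """Pick the overlay state from the current tracking quality.

    All thresholds are illustrative placeholders, not clinical values.
    Stale or badly drifting tracking suppresses the overlay outright,
    because a confident-looking but wrong hologram is the worst case.
    """
    if ms_since_last_fix > stale_ms or tracking_error_mm >= fail_mm:
        return OverlayState.SUPPRESSED
    if tracking_error_mm >= warn_mm:
        return OverlayState.DEGRADED
    return OverlayState.TRUSTED


print(next_state(0.4, 16))   # healthy tracking
print(next_state(1.5, 16))   # drifting, still usable with a warning
print(next_state(0.4, 500))  # no recent fix: fall back to direct vision
```

The ordering of the checks is the design decision here: the failure conditions are evaluated first, so ambiguity always resolves toward showing less.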

Researchers at the Stanford Byers Center for Biodesign have been exploring these questions, and the emerging consensus points toward explicit uncertainty visualization as a core design principle. Rather than rendering AR overlays as solid, photorealistic structures, systems should visually encode confidence levels, perhaps through transparency, edge definition, or color saturation, so that clinicians have an immediate, intuitive sense of how much to trust what they’re seeing. This is sophisticated information design work that requires collaboration between UX designers, clinical informaticists, and human factors engineers in ways the industry hasn’t always prioritized.
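
A minimal Python sketch of what encoding confidence could look like in practice follows. It assumes the system can produce a confidence score between 0 and 1 for each overlay, which is itself a hard problem; the `confidence_style` function, the linear mapping, the 0.25 opacity floor, and the 0.5 cutoff for dashed edges are all hypothetical choices made for illustration, not an established standard.

```python
def confidence_style(confidence, min_opacity=0.25):
    """Map a confidence score in [0, 1] to visual properties.

    Higher confidence gives a more opaque, more saturated overlay with a
    solid edge. Low confidence fades toward min_opacity and switches to
    a dashed edge. Out-of-range scores are clamped to [0, 1].
    """
    c = min(1.0, max(0.0, confidence))
    opacity = min_opacity + (1.0 - min_opacity) * c
    return {
        "opacity": round(opacity, 2),
        "saturation": round(c, 2),
        "dashed_edge": c < 0.5,
    }


print(confidence_style(1.0))  # fully trusted overlay
print(confidence_style(0.0))  # barely-there overlay with a dashed edge
```

Using three channels at once is deliberate redundancy: a clinician who misses a subtle change in transparency under stress may still register the switch from a solid to a dashed outline.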

Regulatory Reality and the UX of Compliance

The FDA has regulatory authority over medical devices, and AR systems used in clinical settings are increasingly being classified as Software as a Medical Device (SaMD). This means that the UX decisions you make aren’t just evaluated by users; they’re evaluated by regulators seeking evidence that your design minimizes risk of harm. The FDA’s guidance on human factors engineering requires manufacturers to conduct usability testing that specifically identifies use-related hazards: scenarios in which the design of the interface itself could lead to clinical errors.

This regulatory environment makes a strong case for investing in UX research in healthcare AR—it’s not just a good idea, it’s the law. But the specificity of what’s required goes far beyond typical usability testing. You need to identify critical tasks, simulate realistic stress conditions, test with representative user populations, and document everything in a format that aligns with FDA Human Factors guidance. For most UX teams trained in agile product development, this level of rigor feels foreign and slow. But slowing down to get safety design right in healthcare AR isn’t bureaucratic friction—it’s the point.

The tension between the rapid iteration culture of tech and the methodical validation requirements of medical device regulation is one of the defining challenges for product teams building in this space. Companies that learn to run fast UX research within regulatory constraints, using simulation-based usability testing to surface problems early instead of waiting for clinical trials to expose them, will gain a real competitive edge and will be acting more ethically. Speed and safety aren’t mutually exclusive. But they require a different kind of design discipline than most product teams currently practice.

The future of AR in healthcare UX is simultaneously more exciting and more demanding than most of the breathless technology coverage would have you believe. The possibilities are real, and the initial results are strong: improved surgical outcomes, better training, more informed patients, and clinical processes that actually fit how users think and work. But realizing that potential requires a design community willing to operate at a level of rigor, humility, and cross-disciplinary collaboration that goes far beyond shipping a beautiful interface. The UX decisions being made in AR healthcare labs and product studios right now will shape clinical practice for decades. If you’re working in this space or thinking about entering it, the question isn’t whether this technology will matter. It’s whether you’ll be one of the designers who gets it right.